Secure messaging... with patients in control of their data

Don’t we all want clinical information systems that support both clinicians and patients with clinical care?

However, storing and using information relating to patients, whether for direct clinical care, for audit and service evaluation, or for secondary uses, is potentially fraught with difficulty. There are many examples, such as the furore surrounding NHS England’s care.data project or the TPP SystmOne debacle.

As I have mentioned before, “a single misstep in either the use of data, the perceived use of data or lack of clarity surrounding either has the potential to close down any programme, however innovative.”

How then can we build systems that are open and transparent and yet secure-by-design?

For many, these goals are mutually exclusive. Many proprietary, closed systems adopt security-by-obscurity, with data locked away, inaccessible to third parties, and with low levels of interoperability. Opening up access to such systems is, by definition, something that reduces security and potentially allows sensitive, patient-identifiable information to be accessed inappropriately.

How do you give secure and safe control of data to patients?

I would argue that more harm comes to patients from a lack of information sharing than from excessive sharing. So many of my patients are surprised that I have not seen, and cannot see, the results of investigations and clinical opinions from other centres. Others are surprised to learn that most of us have no automatic systems to alert us to results, relying instead on crude manual processes and the receipt of a report before we can act. Such processes are prone to error; what happens if I do not receive a written report for a scan?

As I have written before, we need systems that allow medicine to run on rails. Therefore, we need to open up data access, but in a verifiably safe, transparent and secure way.

The solution is to put the patient at the centre of what we do and to give them control over the use of their data.

The challenge then becomes how to allow patients to opt in or opt out of the use of their data for different purposes in an open way, and to see for themselves how their data is used. We need systems that record patient consent and log access to data.
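The two requirements in that last sentence, recording consent per purpose and logging every access, can be sketched as a simple data structure. This is a minimal illustration and not the openconsent implementation. The class name, the purpose labels and the default of opt-out are all hypothetical assumptions. Note that this naive version still keeps everything under one identifiable patient record, which is exactly the problem discussed next.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Tuple


@dataclass
class ConsentRecord:
    """Hypothetical per-patient record of consent choices plus an access log."""
    # Assumption: where the patient has expressed no choice, default to opt-out.
    default_opt_in: bool = False
    # Purpose (e.g. "research", "service-evaluation") -> True if opted in.
    choices: Dict[str, bool] = field(default_factory=dict)
    # (timestamp, who, purpose, allowed) for every attempted use of the data.
    access_log: List[Tuple[datetime, str, str, bool]] = field(default_factory=list)

    def set_choice(self, purpose: str, opted_in: bool) -> None:
        self.choices[purpose] = opted_in

    def permits(self, purpose: str) -> bool:
        return self.choices.get(purpose, self.default_opt_in)

    def request_access(self, who: str, purpose: str) -> bool:
        """Check consent and log the attempt whether or not it is allowed."""
        allowed = self.permits(purpose)
        self.access_log.append((datetime.now(timezone.utc), who, purpose, allowed))
        return allowed


record = ConsentRecord()
record.set_choice("research", True)
record.set_choice("service-evaluation", False)
record.request_access("audit team", "service-evaluation")  # refused, but logged
record.request_access("research nurse", "research")        # granted and logged
```

Logging refused requests as well as granted ones lets the patient see who has tried to use their data, not only who succeeded.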

The danger of information leakage

It is quite straightforward to create electronic systems that record a patient’s preferences and choices in a database.

For example, Mr Smith might record his interest in research generally and specifically sign up for projects relating to diabetes mellitus and HIV. However, such a naive implementation would leak information through this metadata: simply recording that Mr Smith has signed up to an HIV project discloses something sensitive about him. We might encrypt the database and secure the server, and yet still be at risk of inadvertently disclosing private medical information as a result of how different pieces of data are linked.
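To make the leak concrete, here is a hypothetical naive schema in which consent rows link an identified patient directly to named projects. The table layout, identifiers and fictional NHS number are assumptions for illustration only. Anyone who can read the tables, or a copy of them, can answer "who is in the HIV study?" without ever opening a clinical record.

```python
# Hypothetical naive schema: consent rows link an identified patient to projects.
patients = {1: {"name": "Mr Smith", "nhs_number": "999 000 0001"}}  # fictional
projects = {
    10: "General research register",
    11: "Diabetes mellitus study",
    12: "HIV cohort study",
}
consents = [(1, 10), (1, 11), (1, 12)]  # (patient_id, project_id)


def participants(project_name: str) -> list:
    """A simple join over the consent table. No clinical record is touched,
    yet the answer discloses a likely diagnosis for each person returned."""
    wanted = {pid for pid, name in projects.items() if name == project_name}
    return [patients[pt]["name"] for pt, proj in consents if proj in wanted]


print(participants("HIV cohort study"))  # ['Mr Smith']
```

Encrypting the database at rest does not help here, because the leak comes from the links between rows and not from the contents of any single field.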

Secure messaging

Similar issues arise in secure patient messaging. My patients and their advocates want to be able to contact their healthcare teams electronically. The success of my existing EPR messaging solution is evidence that my clinical colleagues also want ways of communicating among themselves, while ensuring that such messages become part of the wider health record.

We frequently receive emails from patients and relatives, and yet these communications are not stored as part of the health record, are not easily shared with other members of the healthcare team and are inherently insecure. Providing systems that allow secure messaging between patients and their clinical services should be an easy win for making our services more responsive to patients.

However, I have previously put off adding patient access and secure messaging to my internet-hosted EPR portal, as I simply do not want to risk the inadvertent leak of patient information through either accidental or malicious means. It is not difficult to envisage a system much like online banking, in which messages are sent and received through a dedicated website or application, but what if it were hacked?

We can solve some of these issues with encryption, but messaging and other forms of communication are also subject to information leakage through the metadata relating to those communications. The security services don’t necessarily need to know what messages contain to make important inferences about the relationships between people; it is enough to know the sender and the recipient.

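For example, if a patient emails a specialist clinic’s address, information leakage is taking place: the address alone reveals which service the patient is in contact with, even if the message itself is encrypted.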

openconsent

I’ve been working on a small proof-of-concept application that demonstrates a way of connecting patients and services without leaking information about those relationships. It is called openconsent and the source code is available on GitHub.

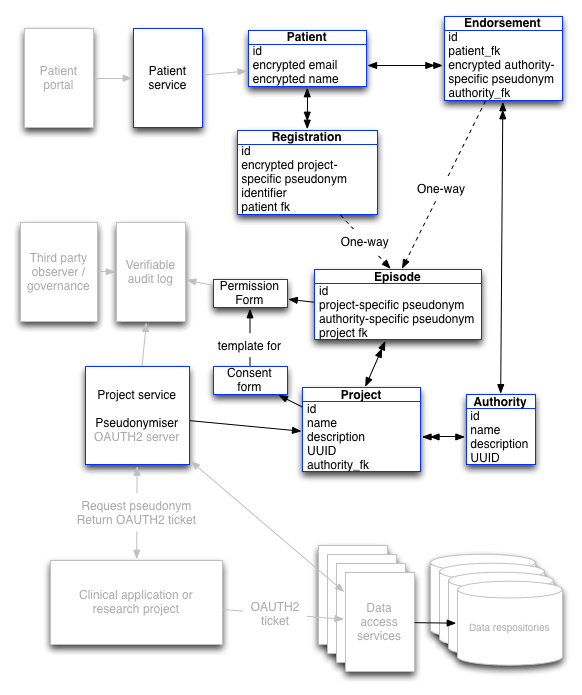

The following schematic shows the model:

This looks complicated, but it really isn’t. The blue boxes show functionality already completed within the proof-of-concept project, and the greyed-out boxes show work that is yet to be done.

More technical information can be found in my previous two blog posts here and here.

The answer comes down to using pseudonymous identifiers to create one-way links between different types of data. The result is a system that prevents data linkage unless you already know the patient’s details and have permission to access the data. The technical underpinnings are described in my other posts, so I won’t duplicate that discussion here.
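As a rough illustration of a one-way link, the sketch below derives a pseudonym from a patient's details and a per-project salt. The consent store then holds only pseudonyms. This is an assumption-laden sketch and not the openconsent implementation. The choice of PBKDF2, the fields hashed, the random per-project salts and every function name are hypothetical. A consent can be checked only by someone who already knows who the patient is. The pseudonyms for one patient also differ between projects, so they cannot be joined.

```python
import hashlib
import secrets
from typing import Dict

# Assumption: each project has its own random salt, held by the consent service.
PROJECT_SALTS: Dict[str, bytes] = {
    "diabetes": secrets.token_bytes(16),
    "hiv": secrets.token_bytes(16),
}

# The consent store holds pseudonyms only: no names, no NHS numbers, and no
# identifier shared between projects that could be used to join them.
consent_store: Dict[str, bool] = {}


def pseudonym(nhs_number: str, date_of_birth: str, project: str,
              iterations: int = 100_000) -> str:
    """Derive a one-way identifier for a (patient, project) pair.

    PBKDF2 is used rather than a single hash because patient details have
    little entropy and would otherwise be cheap to guess by brute force.
    """
    details = f"{nhs_number.replace(' ', '')}|{date_of_birth}".encode("utf-8")
    digest = hashlib.pbkdf2_hmac("sha256", details, PROJECT_SALTS[project], iterations)
    return digest.hex()


def record_consent(nhs_number: str, date_of_birth: str, project: str,
                   opted_in: bool) -> None:
    consent_store[pseudonym(nhs_number, date_of_birth, project)] = opted_in


def has_consented(nhs_number: str, date_of_birth: str, project: str) -> bool:
    # The lookup only works if you already know who the patient is.
    return consent_store.get(pseudonym(nhs_number, date_of_birth, project), False)


record_consent("999 000 0001", "1960-01-31", "diabetes", True)  # fictional patient
```

The salts themselves must be protected. Anyone holding both a salt and the store could still try candidate patient details, although the key-stretching makes each guess deliberately expensive.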

The same technology can be used to implement secure messaging. Messages sent between people and organisations suffer from the same information leakage problem because of the metadata required to deliver them, even if their content is encrypted.

I am now working on adding secure messaging functionality, using public-key cryptography to encrypt the content of messages and one-way pseudonymous identifiers to protect the metadata relating to those messages, in a similar way to the obfuscation of opt-in and opt-out preferences within the consent model.
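The metadata half of that idea can be sketched with a keyed hash. A patient and a service share a secret, and the n-th message between them is stored under an identifier derived from that secret. The server therefore sees only unrelated-looking slot identifiers and opaque blobs, with no sender or recipient. This is a hypothetical sketch, not the openconsent design. The shared-secret enrolment step, the slot scheme and all names are assumptions. Content encryption with public keys needs a cryptography library and is not shown; the ciphertext here is a placeholder. Network-level metadata such as IP addresses and timing is also outside the scope of this sketch.

```python
import hashlib
import hmac
import secrets
from typing import Dict, Optional


def slot_id(relationship_secret: bytes, sequence: int) -> str:
    """One-way identifier for the n-th message in one patient-service relationship.

    Without the secret, two slot ids cannot be linked to each other or to
    either party; with it, both ends can compute where to write and read.
    """
    label = f"slot:{sequence}".encode("utf-8")
    return hmac.new(relationship_secret, label, hashlib.sha256).hexdigest()


class MessageStore:
    """Server side: keeps opaque blobs under opaque slot ids, nothing else."""

    def __init__(self) -> None:
        self._slots: Dict[str, bytes] = {}

    def put(self, slot: str, ciphertext: bytes) -> None:
        if slot in self._slots:
            raise ValueError("slot already used")
        self._slots[slot] = ciphertext

    def get(self, slot: str) -> Optional[bytes]:
        return self._slots.get(slot)


# Assumption: the secret is agreed when the patient enrols with the service.
secret = secrets.token_bytes(32)
store = MessageStore()

# Sender: placeholder bytes stand in for the public-key-encrypted message body.
store.put(slot_id(secret, 0), b"<ciphertext of first message>")
store.put(slot_id(secret, 1), b"<ciphertext of reply>")

# Recipient: recomputes the expected slot ids to fetch the messages.
first = store.get(slot_id(secret, 0))
```

Deriving a fresh slot for every message, rather than using one fixed mailbox per relationship, also stops the server from counting how many messages a given relationship has exchanged.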

For technical readers, a review of some of the unit tests will demonstrate the use of the framework.

The immediate next steps for development are:

- add secure messaging

- complete the REST API (although much is already complete)

- add OAuth2 server

Mark